Last Updated: 3/7/2026

Memory

Add short-term memory

`from langgraph.checkpoint.memory import InMemorySaver
from langgraph.graph import StateGraph

checkpointer = InMemorySaver()

builder = StateGraph(...)
graph = builder.compile(checkpointer=checkpointer)

graph.invoke(
    {"messages": [{"role": "user", "content": "hi! i am Bob"}]},
    {"configurable": {"thread_id": "1"}},
)`
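The role of `thread_id` in the call above can be pictured with a toy model. The sketch below is not LangGraph's implementation: `ToyCheckpointer` and `toy_invoke` are hypothetical names invented here, and the only assumption carried over from the example is that saved state is looked up by `config["configurable"]["thread_id"]`.

```python
from collections import defaultdict


class ToyCheckpointer:
    """Hypothetical stand-in for a checkpointer: one message history per thread."""

    def __init__(self):
        self._threads = defaultdict(list)

    def load(self, thread_id):
        # Return a copy so callers cannot mutate saved state by accident.
        return list(self._threads[thread_id])

    def save(self, thread_id, messages):
        self._threads[thread_id] = list(messages)


def toy_invoke(checkpointer, new_messages, config):
    """Append new messages to whatever was saved for this thread, then persist."""
    thread_id = config["configurable"]["thread_id"]
    history = checkpointer.load(thread_id) + list(new_messages)
    checkpointer.save(thread_id, history)
    return history


toy_checkpointer = ToyCheckpointer()
question = [{"role": "user", "content": "what's my name?"}]

toy_invoke(
    toy_checkpointer,
    [{"role": "user", "content": "hi! i am Bob"}],
    {"configurable": {"thread_id": "1"}},
)
same_thread = toy_invoke(toy_checkpointer, question, {"configurable": {"thread_id": "1"}})
other_thread = toy_invoke(toy_checkpointer, question, {"configurable": {"thread_id": "2"}})

print(len(same_thread), len(other_thread))
```

In this toy, the second call on thread `"1"` sees the earlier introduction, while thread `"2"` starts from an empty history.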

Use in production

`from langgraph.checkpoint.postgres import PostgresSaver

DB_URI = "postgresql://postgres:postgres@localhost:5442/postgres?sslmode=disable"
with PostgresSaver.from_conn_string(DB_URI) as checkpointer:
    builder = StateGraph(...)
    graph = builder.compile(checkpointer=checkpointer)`

Example: using Postgres checkpointer

pip install -U "psycopg[binary,pool]" langgraph langgraph-checkpoint-postgres

Call `checkpointer.setup()` the first time you use the Postgres checkpointer.

`# Sync
from langchain.chat_models import init_chat_model
from langgraph.graph import StateGraph, MessagesState, START
from langgraph.checkpoint.postgres import PostgresSaver

model = init_chat_model(model="claude-haiku-4-5-20251001")

DB_URI = "postgresql://postgres:postgres@localhost:5442/postgres?sslmode=disable"
with PostgresSaver.from_conn_string(DB_URI) as checkpointer:
    # checkpointer.setup()

    def call_model(state: MessagesState):
        response = model.invoke(state["messages"])
        return {"messages": response}

    builder = StateGraph(MessagesState)
    builder.add_node(call_model)
    builder.add_edge(START, "call_model")

    graph = builder.compile(checkpointer=checkpointer)

    config = {"configurable": {"thread_id": "1"}}

    for chunk in graph.stream(
        {"messages": [{"role": "user", "content": "hi! I'm bob"}]},
        config,
        stream_mode="values",
    ):
        chunk["messages"][-1].pretty_print()

    for chunk in graph.stream(
        {"messages": [{"role": "user", "content": "what's my name?"}]},
        config,
        stream_mode="values",
    ):
        chunk["messages"][-1].pretty_print()`

`# Async
from langchain.chat_models import init_chat_model
from langgraph.graph import StateGraph, MessagesState, START
from langgraph.checkpoint.postgres.aio import AsyncPostgresSaver

model = init_chat_model(model="claude-haiku-4-5-20251001")

DB_URI = "postgresql://postgres:postgres@localhost:5442/postgres?sslmode=disable"
async with AsyncPostgresSaver.from_conn_string(DB_URI) as checkpointer:
    # await checkpointer.setup()

    async def call_model(state: MessagesState):
        response = await model.ainvoke(state["messages"])
        return {"messages": response}

    builder = StateGraph(MessagesState)
    builder.add_node(call_model)
    builder.add_edge(START, "call_model")

    graph = builder.compile(checkpointer=checkpointer)

    config = {"configurable": {"thread_id": "1"}}

    async for chunk in graph.astream(
        {"messages": [{"role": "user", "content": "hi! I'm bob"}]},
        config,
        stream_mode="values",
    ):
        chunk["messages"][-1].pretty_print()

    async for chunk in graph.astream(
        {"messages": [{"role": "user", "content": "what's my name?"}]},
        config,
        stream_mode="values",
    ):
        chunk["messages"][-1].pretty_print()`

Example: using MongoDB checkpointer

pip install -U pymongo langgraph langgraph-checkpoint-mongodb

`# Sync
from langchain.chat_models import init_chat_model
from langgraph.graph import StateGraph, MessagesState, START
from langgraph.checkpoint.mongodb import MongoDBSaver

model = init_chat_model(model="claude-haiku-4-5-20251001")

DB_URI = "localhost:27017"
with MongoDBSaver.from_conn_string(DB_URI) as checkpointer:

    def call_model(state: MessagesState):
        response = model.invoke(state["messages"])
        return {"messages": response}

    builder = StateGraph(MessagesState)
    builder.add_node(call_model)
    builder.add_edge(START, "call_model")

    graph = builder.compile(checkpointer=checkpointer)

    config = {"configurable": {"thread_id": "1"}}

    for chunk in graph.stream(
        {"messages": [{"role": "user", "content": "hi! I'm bob"}]},
        config,
        stream_mode="values",
    ):
        chunk["messages"][-1].pretty_print()

    for chunk in graph.stream(
        {"messages": [{"role": "user", "content": "what's my name?"}]},
        config,
        stream_mode="values",
    ):
        chunk["messages"][-1].pretty_print()`

`# Async
from langchain.chat_models import init_chat_model
from langgraph.graph import StateGraph, MessagesState, START
from langgraph.checkpoint.mongodb.aio import AsyncMongoDBSaver

model = init_chat_model(model="claude-haiku-4-5-20251001")

DB_URI = "localhost:27017"
async with AsyncMongoDBSaver.from_conn_string(DB_URI) as checkpointer:

    async def call_model(state: MessagesState):
        response = await model.ainvoke(state["messages"])
        return {"messages": response}

    builder = StateGraph(MessagesState)
    builder.add_node(call_model)
    builder.add_edge(START, "call_model")

    graph = builder.compile(checkpointer=checkpointer)

    config = {"configurable": {"thread_id": "1"}}

    async for chunk in graph.astream(
        {"messages": [{"role": "user", "content": "hi! I'm bob"}]},
        config,
        stream_mode="values",
    ):
        chunk["messages"][-1].pretty_print()

    async for chunk in graph.astream(
        {"messages": [{"role": "user", "content": "what's my name?"}]},
        config,
        stream_mode="values",
    ):
        chunk["messages"][-1].pretty_print()`

Example: using Redis checkpointer

pip install -U langgraph langgraph-checkpoint-redis

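Call `checkpointer.setup()` (or `await checkpointer.asetup()` in async code) the first time you use the Redis checkpointer.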

`# Sync
from langchain.chat_models import init_chat_model
from langgraph.graph import StateGraph, MessagesState, START
from langgraph.checkpoint.redis import RedisSaver

model = init_chat_model(model="claude-haiku-4-5-20251001")

DB_URI = "redis://localhost:6379"
with RedisSaver.from_conn_string(DB_URI) as checkpointer:
    # checkpointer.setup()

    def call_model(state: MessagesState):
        response = model.invoke(state["messages"])
        return {"messages": response}

    builder = StateGraph(MessagesState)
    builder.add_node(call_model)
    builder.add_edge(START, "call_model")

    graph = builder.compile(checkpointer=checkpointer)

    config = {"configurable": {"thread_id": "1"}}

    for chunk in graph.stream(
        {"messages": [{"role": "user", "content": "hi! I'm bob"}]},
        config,
        stream_mode="values",
    ):
        chunk["messages"][-1].pretty_print()

    for chunk in graph.stream(
        {"messages": [{"role": "user", "content": "what's my name?"}]},
        config,
        stream_mode="values",
    ):
        chunk["messages"][-1].pretty_print()`

`# Async
from langchain.chat_models import init_chat_model
from langgraph.graph import StateGraph, MessagesState, START
from langgraph.checkpoint.redis.aio import AsyncRedisSaver

model = init_chat_model(model="claude-haiku-4-5-20251001")

DB_URI = "redis://localhost:6379"
async with AsyncRedisSaver.from_conn_string(DB_URI) as checkpointer:
    # await checkpointer.asetup()

    async def call_model(state: MessagesState):
        response = await model.ainvoke(state["messages"])
        return {"messages": response}

    builder = StateGraph(MessagesState)
    builder.add_node(call_model)
    builder.add_edge(START, "call_model")

    graph = builder.compile(checkpointer=checkpointer)

    config = {"configurable": {"thread_id": "1"}}

    async for chunk in graph.astream(
        {"messages": [{"role": "user", "content": "hi! I'm bob"}]},
        config,
        stream_mode="values",
    ):
        chunk["messages"][-1].pretty_print()

    async for chunk in graph.astream(
        {"messages": [{"role": "user", "content": "what's my name?"}]},
        config,
        stream_mode="values",
    ):
        chunk["messages"][-1].pretty_print()`

Use in subgraphs

`from langgraph.graph import START, StateGraph
from langgraph.checkpoint.memory import InMemorySaver
from typing import TypedDict

class State(TypedDict):
    foo: str

# Subgraph

def subgraph_node_1(state: State):
    return {"foo": state["foo"] + "bar"}

subgraph_builder = StateGraph(State)
subgraph_builder.add_node(subgraph_node_1)
subgraph_builder.add_edge(START, "subgraph_node_1")
subgraph = subgraph_builder.compile()

# Parent graph

builder = StateGraph(State)
builder.add_node("node_1", subgraph)
builder.add_edge(START, "node_1")

checkpointer = InMemorySaver()
graph = builder.compile(checkpointer=checkpointer)`

To give a subgraph its own memory, compile it with `checkpointer=True`:

`subgraph_builder = StateGraph(...)
subgraph = subgraph_builder.compile(checkpointer=True)`

Add long-term memory

`from langgraph.store.memory import InMemoryStore
from langgraph.graph import StateGraph

store = InMemoryStore()

builder = StateGraph(...)
graph = builder.compile(store=store)`

Access the store inside nodes

Inside a node, reach the store through the `Runtime` object, as `runtime.store`.

`from dataclasses import dataclass
from langgraph.runtime import Runtime
from langgraph.graph import StateGraph, MessagesState, START
import uuid

@dataclass
class Context:
    user_id: str

async def call_model(state: MessagesState, runtime: Runtime[Context]):
    user_id = runtime.context.user_id
    namespace = (user_id, "memories")

    # Search for relevant memories
    memories = await runtime.store.asearch(
        namespace, query=state["messages"][-1].content, limit=3
    )
    info = "\n".join([d.value["data"] for d in memories])

    # ... Use memories in model call

    # Store a new memory
    await runtime.store.aput(
        namespace, str(uuid.uuid4()), {"data": "User prefers dark mode"}
    )

builder = StateGraph(MessagesState, context_schema=Context)
builder.add_node(call_model)
builder.add_edge(START, "call_model")
graph = builder.compile(store=store)

# Pass context at invocation time.
# call_model is async, so use the async entry point.
await graph.ainvoke(
    {"messages": [{"role": "user", "content": "hi"}]},
    {"configurable": {"thread_id": "1"}},
    context=Context(user_id="1"),
)`
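The namespace tuple is what scopes memories to one user regardless of thread. As a rough illustration only, the sketch below uses a hypothetical `ToyStore` class (not the LangGraph store API) that groups values by namespace and returns only the items whose namespace matches exactly.

```python
import uuid


class ToyStore:
    """Hypothetical in-memory stand-in for a store: values grouped by namespace tuple."""

    def __init__(self):
        self._items = {}

    def put(self, namespace, key, value):
        # The (namespace, key) pair identifies one stored item.
        self._items[(tuple(namespace), key)] = value

    def search(self, namespace, limit=10):
        # Return up to `limit` values stored under exactly this namespace.
        wanted = tuple(namespace)
        matches = [value for (ns, _key), value in self._items.items() if ns == wanted]
        return matches[:limit]


toy_store = ToyStore()
toy_store.put(("1", "memories"), str(uuid.uuid4()), {"data": "User prefers dark mode"})
toy_store.put(("2", "memories"), str(uuid.uuid4()), {"data": "User prefers light mode"})

user_1_memories = toy_store.search(("1", "memories"), limit=3)
info = "\n".join(item["data"] for item in user_1_memories)
print(info)
```

Only the memory written under user `"1"` comes back, which mirrors how the node above builds `info` from the current user's namespace.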

Use in production

`from langgraph.store.postgres import PostgresStore

DB_URI = "postgresql://postgres:postgres@localhost:5442/postgres?sslmode=disable"
with PostgresStore.from_conn_string(DB_URI) as store:
    builder = StateGraph(...)
    graph = builder.compile(store=store)`

Example: using Postgres store

pip install -U "psycopg[binary,pool]" langgraph langgraph-checkpoint-postgres

Call `store.setup()` the first time you use the Postgres store.

`# Async
from dataclasses import dataclass
from langchain.chat_models import init_chat_model
from langgraph.graph import StateGraph, MessagesState, START
from langgraph.checkpoint.postgres.aio import AsyncPostgresSaver
from langgraph.store.postgres.aio import AsyncPostgresStore
from langgraph.runtime import Runtime
import uuid

model = init_chat_model(model="claude-haiku-4-5-20251001")

@dataclass
class Context:
    user_id: str

async def call_model(
    state: MessagesState,
    runtime: Runtime[Context],
):
    user_id = runtime.context.user_id
    namespace = ("memories", user_id)
    memories = await runtime.store.asearch(namespace, query=str(state["messages"][-1].content))
    info = "\n".join([d.value["data"] for d in memories])
    system_msg = f"You are a helpful assistant talking to the user. User info: {info}"

    # Store new memories if the user asks the model to remember
    last_message = state["messages"][-1]
    if "remember" in last_message.content.lower():
        memory = "User name is Bob"
        await runtime.store.aput(namespace, str(uuid.uuid4()), {"data": memory})

    response = await model.ainvoke(
        [{"role": "system", "content": system_msg}] + state["messages"]
    )
    return {"messages": response}

DB_URI = "postgresql://postgres:postgres@localhost:5442/postgres?sslmode=disable"

async with (
    AsyncPostgresStore.from_conn_string(DB_URI) as store,
    AsyncPostgresSaver.from_conn_string(DB_URI) as checkpointer,
):
    # await store.setup()
    # await checkpointer.setup()

    builder = StateGraph(MessagesState, context_schema=Context)
    builder.add_node(call_model)
    builder.add_edge(START, "call_model")

    graph = builder.compile(
        checkpointer=checkpointer,
        store=store,
    )

    config = {"configurable": {"thread_id": "1"}}
    async for chunk in graph.astream(
        {"messages": [{"role": "user", "content": "Hi! Remember: my name is Bob"}]},
        config,
        stream_mode="values",
        context=Context(user_id="1"),
    ):
        chunk["messages"][-1].pretty_print()

    config = {"configurable": {"thread_id": "2"}}
    async for chunk in graph.astream(
        {"messages": [{"role": "user", "content": "what is my name?"}]},
        config,
        stream_mode="values",
        context=Context(user_id="1"),
    ):
        chunk["messages"][-1].pretty_print()`

`# Sync
from dataclasses import dataclass
from langchain.chat_models import init_chat_model
from langgraph.graph import StateGraph, MessagesState, START
from langgraph.checkpoint.postgres import PostgresSaver
from langgraph.store.postgres import PostgresStore
from langgraph.runtime import Runtime
import uuid

model = init_chat_model(model="claude-haiku-4-5-20251001")

@dataclass
class Context:
    user_id: str

def call_model(
    state: MessagesState,
    runtime: Runtime[Context],
):
    user_id = runtime.context.user_id
    namespace = ("memories", user_id)
    memories = runtime.store.search(namespace, query=str(state["messages"][-1].content))
    info = "\n".join([d.value["data"] for d in memories])
    system_msg = f"You are a helpful assistant talking to the user. User info: {info}"

    # Store new memories if the user asks the model to remember
    last_message = state["messages"][-1]
    if "remember" in last_message.content.lower():
        memory = "User name is Bob"
        runtime.store.put(namespace, str(uuid.uuid4()), {"data": memory})

    response = model.invoke(
        [{"role": "system", "content": system_msg}] + state["messages"]
    )
    return {"messages": response}

DB_URI = "postgresql://postgres:postgres@localhost:5442/postgres?sslmode=disable"

with (
    PostgresStore.from_conn_string(DB_URI) as store,
    PostgresSaver.from_conn_string(DB_URI) as checkpointer,
):
    # store.setup()
    # checkpointer.setup()

    builder = StateGraph(MessagesState, context_schema=Context)
    builder.add_node(call_model)
    builder.add_edge(START, "call_model")

    graph = builder.compile(
        checkpointer=checkpointer,
        store=store,
    )

    config = {"configurable": {"thread_id": "1"}}
    for chunk in graph.stream(
        {"messages": [{"role": "user", "content": "Hi! Remember: my name is Bob"}]},
        config,
        stream_mode="values",
        context=Context(user_id="1"),
    ):
        chunk["messages"][-1].pretty_print()

    config = {"configurable": {"thread_id": "2"}}
    for chunk in graph.stream(
        {"messages": [{"role": "user", "content": "what is my name?"}]},
        config,
        stream_mode="values",
        context=Context(user_id="1"),
    ):
        chunk["messages"][-1].pretty_print()`

Example: using Redis store

pip install -U langgraph langgraph-checkpoint-redis

Call `store.setup()` the first time you use the Redis store.

`# Async
from dataclasses import dataclass
from langchain.chat_models import init_chat_model
from langgraph.graph import StateGraph, MessagesState, START
from langgraph.checkpoint.redis.aio import AsyncRedisSaver
from langgraph.store.redis.aio import AsyncRedisStore
from langgraph.runtime import Runtime
import uuid

model = init_chat_model(model="claude-haiku-4-5-20251001")

@dataclass
class Context:
    user_id: str

async def call_model(
    state: MessagesState,
    runtime: Runtime[Context],
):
    user_id = runtime.context.user_id
    namespace = ("memories", user_id)
    memories = await runtime.store.asearch(namespace, query=str(state["messages"][-1].content))
    info = "\n".join([d.value["data"] for d in memories])
    system_msg = f"You are a helpful assistant talking to the user. User info: {info}"

    # Store new memories if the user asks the model to remember
    last_message = state["messages"][-1]
    if "remember" in last_message.content.lower():
        memory = "User name is Bob"
        await runtime.store.aput(namespace, str(uuid.uuid4()), {"data": memory})

    response = await model.ainvoke(
        [{"role": "system", "content": system_msg}] + state["messages"]
    )
    return {"messages": response}

DB_URI = "redis://localhost:6379"

async with (
    AsyncRedisStore.from_conn_string(DB_URI) as store,
    AsyncRedisSaver.from_conn_string(DB_URI) as checkpointer,
):
    # await store.setup()
    # await checkpointer.asetup()

    builder = StateGraph(MessagesState, context_schema=Context)
    builder.add_node(call_model)
    builder.add_edge(START, "call_model")

    graph = builder.compile(
        checkpointer=checkpointer,
        store=store,
    )

    config = {"configurable": {"thread_id": "1"}}
    async for chunk in graph.astream(
        {"messages": [{"role": "user", "content": "Hi! Remember: my name is Bob"}]},
        config,
        stream_mode="values",
        context=Context(user_id="1"),
    ):
        chunk["messages"][-1].pretty_print()

    config = {"configurable": {"thread_id": "2"}}
    async for chunk in graph.astream(
        {"messages": [{"role": "user", "content": "what is my name?"}]},
        config,
        stream_mode="values",
        context=Context(user_id="1"),
    ):
        chunk["messages"][-1].pretty_print()`

`# Sync
from dataclasses import dataclass
from langchain.chat_models import init_chat_model
from langgraph.graph import StateGraph, MessagesState, START
from langgraph.checkpoint.redis import RedisSaver
from langgraph.store.redis import RedisStore
from langgraph.runtime import Runtime
import uuid

model = init_chat_model(model="claude-haiku-4-5-20251001")

@dataclass
class Context:
    user_id: str

def call_model(
    state: MessagesState,
    runtime: Runtime[Context],
):
    user_id = runtime.context.user_id
    namespace = ("memories", user_id)
    memories = runtime.store.search(namespace, query=str(state["messages"][-1].content))
    info = "\n".join([d.value["data"] for d in memories])
    system_msg = f"You are a helpful assistant talking to the user. User info: {info}"

    # Store new memories if the user asks the model to remember
    last_message = state["messages"][-1]
    if "remember" in last_message.content.lower():
        memory = "User name is Bob"
        runtime.store.put(namespace, str(uuid.uuid4()), {"data": memory})

    response = model.invoke(
        [{"role": "system", "content": system_msg}] + state["messages"]
    )
    return {"messages": response}

DB_URI = "redis://localhost:6379"

with (
    RedisStore.from_conn_string(DB_URI) as store,
    RedisSaver.from_conn_string(DB_URI) as checkpointer,
):
    store.setup()
    checkpointer.setup()

    builder = StateGraph(MessagesState, context_schema=Context)
    builder.add_node(call_model)
    builder.add_edge(START, "call_model")

    graph = builder.compile(
        checkpointer=checkpointer,
        store=store,
    )

    config = {"configurable": {"thread_id": "1"}}
    for chunk in graph.stream(
        {"messages": [{"role": "user", "content": "Hi! Remember: my name is Bob"}]},
        config,
        stream_mode="values",
        context=Context(user_id="1"),
    ):
        chunk["messages"][-1].pretty_print()

    config = {"configurable": {"thread_id": "2"}}
    for chunk in graph.stream(
        {"messages": [{"role": "user", "content": "what is my name?"}]},
        config,
        stream_mode="values",
        context=Context(user_id="1"),
    ):
        chunk["messages"][-1].pretty_print()`

Use semantic search

`from langchain.embeddings import init_embeddings
from langgraph.store.memory import InMemoryStore

# Create store with semantic search enabled
embeddings = init_embeddings("openai:text-embedding-3-small")
store = InMemoryStore(
    index={
        "embed": embeddings,
        "dims": 1536,
    }
)

store.put(("user_123", "memories"), "1", {"text": "I love pizza"})
store.put(("user_123", "memories"), "2", {"text": "I am a plumber"})

items = store.search(
    ("user_123", "memories"), query="I'm hungry", limit=1
)`
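To see why a query like "I'm hungry" can surface "I love pizza" even though the two share no content words, it may help to look at ranking by vector similarity in isolation. The sketch below is a toy under loud assumptions: `toy_embed` is a hypothetical two-dimensional keyword "embedding" invented for illustration (a real embedding model such as the one configured above behaves very differently), and `toy_search` is not the store's implementation.

```python
import math

# Hypothetical topic vocabulary; each topic is one dimension of the toy vector.
TOPIC_KEYWORDS = {
    "food": {"pizza", "hungry", "eat"},
    "work": {"plumber", "job"},
}


def toy_embed(text):
    """Map text to [food_score, work_score] by counting topic keywords."""
    words = set(text.lower().replace("'", " ").split())
    return [float(len(words & keywords)) for keywords in TOPIC_KEYWORDS.values()]


def cosine_similarity(a, b):
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    # Treat an all-zero vector as unrelated to everything.
    return dot / norm if norm else 0.0


def toy_search(items, query, limit=1):
    """Rank items by similarity between their embedding and the query embedding."""
    query_vector = toy_embed(query)
    ranked = sorted(
        items,
        key=lambda item: cosine_similarity(toy_embed(item["text"]), query_vector),
        reverse=True,
    )
    return ranked[:limit]


toy_memories = [{"text": "I love pizza"}, {"text": "I am a plumber"}]
top_hits = toy_search(toy_memories, "I'm hungry", limit=1)
print(top_hits[0]["text"])
```

The query and the pizza memory both land on the "food" axis, so that memory ranks first.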

Long-term memory with semantic search

`from langchain.embeddings import init_embeddings
from langchain.chat_models import init_chat_model
from langgraph.store.memory import InMemoryStore
from langgraph.graph import START, MessagesState, StateGraph
from langgraph.runtime import Runtime

model = init_chat_model("gpt-4.1-mini")

# Create store with semantic search enabled
embeddings = init_embeddings("openai:text-embedding-3-small")
store = InMemoryStore(
    index={
        "embed": embeddings,
        "dims": 1536,
    }
)

store.put(("user_123", "memories"), "1", {"text": "I love pizza"})
store.put(("user_123", "memories"), "2", {"text": "I am a plumber"})

async def chat(state: MessagesState, runtime: Runtime):
    # Search based on user's last message
    items = await runtime.store.asearch(
        ("user_123", "memories"), query=state["messages"][-1].content, limit=2
    )
    memories = "\n".join(item.value["text"] for item in items)
    memories = f"## Memories of user\n{memories}" if memories else ""
    response = await model.ainvoke(
        [
            {"role": "system", "content": f"You are a helpful assistant.\n{memories}"},
            *state["messages"],
        ]
    )
    return {"messages": [response]}

builder = StateGraph(MessagesState)
builder.add_node(chat)
builder.add_edge(START, "chat")
graph = builder.compile(store=store)

async for message, metadata in graph.astream(
    input={"messages": [{"role": "user", "content": "I'm hungry"}]},
    stream_mode="messages",
):
    print(message.content, end="")`

Manage short-term memory

Trim messages

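To trim message history, call `trim_messages` with a `strategy` (for example, `"last"` to keep the most recent messages) and a `max_tokens` budget.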

`from langchain_core.messages.utils import (
    trim_messages,
    count_tokens_approximately
)

def call_model(state: MessagesState):
    messages = trim_messages(
        state["messages"],
        strategy="last",
        token_counter=count_tokens_approximately,
        max_tokens=128,
        start_on="human",
        end_on=("human", "tool"),
    )
    response = model.invoke(messages)
    return {"messages": [response]}

builder = StateGraph(MessagesState)
builder.add_node(call_model)
...`
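The idea behind a "keep the last N tokens" strategy can be sketched without any library. The code below is an illustration only, not how `trim_messages` is implemented: `approx_tokens` and `trim_last` are hypothetical helpers, the token count is a crude one-token-per-word assumption, and messages are plain dicts with a `role` key.

```python
def approx_tokens(message):
    # Crude assumption: one token per whitespace-separated word.
    return len(message["content"].split())


def trim_last(messages, max_tokens):
    """Keep the most recent messages that fit the budget, starting on a user turn."""
    kept = []
    total = 0
    for message in reversed(messages):
        cost = approx_tokens(message)
        if total + cost > max_tokens:
            break
        kept.append(message)
        total += cost
    kept.reverse()
    # Drop leading non-user messages so the history starts on a user turn.
    while kept and kept[0]["role"] != "user":
        kept.pop(0)
    return kept


history = [
    {"role": "user", "content": "hi my name is bob"},
    {"role": "assistant", "content": "hello bob"},
    {"role": "user", "content": "write a short poem about cats"},
    {"role": "assistant", "content": "cats nap in warm sunbeams"},
    {"role": "user", "content": "what is my name"},
]

trimmed = trim_last(history, max_tokens=10)
print([m["content"] for m in trimmed])
```

With a budget of 10 toy tokens only the final question survives, which shows the trade-off of trimming: anything outside the window, including the user's name here, is no longer visible to the model.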

Full example: trim messages

`from langchain_core.messages.utils import (
    trim_messages,
    count_tokens_approximately
)
from langchain.chat_models import init_chat_model
from langgraph.graph import StateGraph, START, MessagesState
from langgraph.checkpoint.memory import InMemorySaver

model = init_chat_model("claude-sonnet-4-5-20250929")
summarization_model = model.bind(max_tokens=128)

def call_model(state: MessagesState):
    messages = trim_messages(
        state["messages"],
        strategy="last",
        token_counter=count_tokens_approximately,
        max_tokens=128,
        start_on="human",
        end_on=("human", "tool"),
    )
    response = model.invoke(messages)
    return {"messages": [response]}

checkpointer = InMemorySaver()
builder = StateGraph(MessagesState)
builder.add_node(call_model)
builder.add_edge(START, "call_model")
graph = builder.compile(checkpointer=checkpointer)

config = {"configurable": {"thread_id": "1"}}
graph.invoke({"messages": "hi, my name is bob"}, config)
graph.invoke({"messages": "write a short poem about cats"}, config)
graph.invoke({"messages": "now do the same but for dogs"}, config)
final_response = graph.invoke({"messages": "what's my name?"}, config)

final_response["messages"][-1].pretty_print()`

`================================== Ai Message ==================================

Your name is Bob, as you mentioned when you first introduced yourself.`

Delete messages

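To delete messages from the graph state, return `RemoveMessage` objects from a node. For `RemoveMessage` to work, the state key must use the `add_messages` reducer, as `MessagesState` does.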

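To remove specific messages:

`from langchain.messages import RemoveMessage

def delete_messages(state):
    messages = state["messages"]
    if len(messages) > 2:
        # remove the earliest two messages
        return {"messages": [RemoveMessage(id=m.id) for m in messages[:2]]}`

To remove all messages:

`from langgraph.graph.message import REMOVE_ALL_MESSAGES

def delete_messages(state):
    return {"messages": [RemoveMessage(id=REMOVE_ALL_MESSAGES)]}`

When deleting messages, make sure the resulting history is still valid for your model provider. For example, some providers expect the history to start with a `user` message, and most require an `assistant` message with tool calls to be followed by the matching `tool` result messages.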
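How a removal marker interacts with a message reducer can be modeled in a few lines. This is a simplified sketch, not the real `add_messages` reducer: `toy_add_messages` and `toy_delete_messages` are hypothetical, messages are plain dicts, and a dict with a `remove_id` key stands in for `RemoveMessage`.

```python
def toy_add_messages(existing, updates):
    """Toy reducer: drop messages named by removal markers, append everything else."""
    remove_ids = {u["remove_id"] for u in updates if "remove_id" in u}
    kept = [m for m in existing if m["id"] not in remove_ids]
    kept.extend(u for u in updates if "remove_id" not in u)
    return kept


def toy_delete_messages(state):
    """Toy node: ask the reducer to remove the earliest two messages."""
    messages = state["messages"]
    if len(messages) > 2:
        return {"messages": [{"remove_id": m["id"]} for m in messages[:2]]}
    return {"messages": []}


toy_state = {
    "messages": [
        {"id": "1", "type": "human", "content": "hi! I'm bob"},
        {"id": "2", "type": "ai", "content": "Hi Bob!"},
        {"id": "3", "type": "human", "content": "what's my name?"},
        {"id": "4", "type": "ai", "content": "Your name is Bob."},
    ]
}

update = toy_delete_messages(toy_state)
toy_state["messages"] = toy_add_messages(toy_state["messages"], update["messages"])
print([m["id"] for m in toy_state["messages"]])
```

The node never edits the list directly; it returns markers and the reducer applies them, which is why the state key needs a reducer that understands removals.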

Full example: delete messages

`from langchain.messages import RemoveMessage
from langgraph.graph import StateGraph, START, MessagesState
from langgraph.checkpoint.memory import InMemorySaver

# `model` is assumed to be a chat model created earlier,
# for example with init_chat_model(...).

def delete_messages(state):
    messages = state["messages"]
    if len(messages) > 2:
        # remove the earliest two messages
        return {"messages": [RemoveMessage(id=m.id) for m in messages[:2]]}

def call_model(state: MessagesState):
    response = model.invoke(state["messages"])
    return {"messages": response}

builder = StateGraph(MessagesState)
builder.add_sequence([call_model, delete_messages])
builder.add_edge(START, "call_model")

checkpointer = InMemorySaver()
app = builder.compile(checkpointer=checkpointer)

config = {"configurable": {"thread_id": "1"}}

for event in app.stream(
    {"messages": [{"role": "user", "content": "hi! I'm bob"}]},
    config,
    stream_mode="values",
):
    print([(message.type, message.content) for message in event["messages"]])

for event in app.stream(
    {"messages": [{"role": "user", "content": "what's my name?"}]},
    config,
    stream_mode="values",
):
    print([(message.type, message.content) for message in event["messages"]])`

`[('human', "hi! I'm bob")]
[('human', "hi! I'm bob"), ('ai', 'Hi Bob! How are you doing today? Is there anything I can help you with?')]
[('human', "hi! I'm bob"), ('ai', 'Hi Bob! How are you doing today? Is there anything I can help you with?'), ('human', "what's my name?")]
[('human', "hi! I'm bob"), ('ai', 'Hi Bob! How are you doing today? Is there anything I can help you with?'), ('human', "what's my name?"), ('ai', 'Your name is Bob.')]
[('human', "what's my name?"), ('ai', 'Your name is Bob.')]`

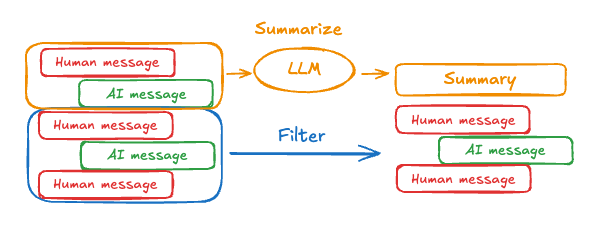

Summarize messages

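To summarize message history, extend `MessagesState` with a `summary` key:

`from langgraph.graph import MessagesState

class State(MessagesState):
    summary: str`

A `summarize_conversation` node can then generate a summary of the chat history, using any existing summary as context. Run it once enough messages have accumulated in the `messages` state key.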

`def summarize_conversation(state: State):

    # First, we get any existing summary
    summary = state.get("summary", "")

    # Create our summarization prompt
    if summary:
        # A summary already exists
        summary_message = (
            f"This is a summary of the conversation to date: {summary}\n\n"
            "Extend the summary by taking into account the new messages above:"
        )
    else:
        summary_message = "Create a summary of the conversation above:"

    # Add prompt to our history
    messages = state["messages"] + [HumanMessage(content=summary_message)]
    response = model.invoke(messages)

    # Delete all but the 2 most recent messages
    delete_messages = [RemoveMessage(id=m.id) for m in state["messages"][:-2]]
    return {"summary": response.content, "messages": delete_messages}`
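The state bookkeeping of a running summary can be tried out without a model. The sketch below is a toy under stated assumptions: `toy_summarize` is a hypothetical stand-in for the LLM call that simply joins message contents, and, unlike the node above (which sends the full history to the model), `toy_summarize_conversation` folds only the messages it is about to drop into the summary.

```python
def toy_summarize(previous_summary, messages):
    # Hypothetical stand-in for the model call: joins message contents.
    addition = "; ".join(m["content"] for m in messages)
    return f"{previous_summary}; {addition}" if previous_summary else addition


def toy_summarize_conversation(state, keep_last=2):
    """Fold all but the `keep_last` most recent messages into the summary.

    `keep_last` is assumed to be at least 1.
    """
    messages = state["messages"]
    dropped, kept = messages[:-keep_last], messages[-keep_last:]
    if not dropped:
        # Nothing old enough to summarize yet.
        return state
    return {
        "summary": toy_summarize(state.get("summary", ""), dropped),
        "messages": kept,
    }


first = toy_summarize_conversation(
    {
        "summary": "",
        "messages": [
            {"content": "hi, my name is bob"},
            {"content": "Hello Bob!"},
            {"content": "write a short poem about cats"},
            {"content": "Cats nap in sunbeams."},
        ],
    }
)

second = toy_summarize_conversation(
    {
        "summary": first["summary"],
        "messages": first["messages"]
        + [{"content": "what's my name?"}, {"content": "Your name is Bob."}],
    }
)

print(first["summary"])
print(second["summary"])
```

Each pass keeps the two newest messages verbatim and extends the summary with whatever fell out of the window, so the name from the first message is still recoverable from the summary after it has been deleted.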

Full example: summarize messages

`from typing import Any, TypedDict

from langchain.chat_models import init_chat_model
from langchain.messages import AnyMessage
from langchain_core.messages.utils import count_tokens_approximately
from langgraph.graph import StateGraph, START, MessagesState
from langgraph.checkpoint.memory import InMemorySaver
from langmem.short_term import SummarizationNode, RunningSummary

model = init_chat_model("claude-sonnet-4-5-20250929")
summarization_model = model.bind(max_tokens=128)

class State(MessagesState):
    # The running summary is tracked in the `context` field,
    # which SummarizationNode expects.
    context: dict[str, RunningSummary]

# State used only to filter the inputs to the call_model node.
class LLMInputState(TypedDict):
    summarized_messages: list[AnyMessage]
    context: dict[str, RunningSummary]

summarization_node = SummarizationNode(
    token_counter=count_tokens_approximately,
    model=summarization_model,
    max_tokens=256,
    max_tokens_before_summary=256,
    max_summary_tokens=128,
)

def call_model(state: LLMInputState):
    response = model.invoke(state["summarized_messages"])
    return {"messages": [response]}

checkpointer = InMemorySaver()
builder = StateGraph(State)
builder.add_node(call_model)
builder.add_node("summarize", summarization_node)
builder.add_edge(START, "summarize")
builder.add_edge("summarize", "call_model")
graph = builder.compile(checkpointer=checkpointer)

# Invoke the graph
config = {"configurable": {"thread_id": "1"}}
graph.invoke({"messages": "hi, my name is bob"}, config)
graph.invoke({"messages": "write a short poem about cats"}, config)
graph.invoke({"messages": "now do the same but for dogs"}, config)
final_response = graph.invoke({"messages": "what's my name?"}, config)

final_response["messages"][-1].pretty_print()
print("\nSummary:", final_response["context"]["running_summary"].summary)`

`================================== Ai Message ==================================

From our conversation, I can see that you introduced yourself as Bob. That's the name you shared with me when we began talking.

Summary: In this conversation, I was introduced to Bob, who then asked me to write a poem about cats. I composed a poem titled "The Mystery of Cats" that captured cats' graceful movements, independent nature, and their special relationship with humans. Bob then requested a similar poem about dogs, so I wrote "The Joy of Dogs," which highlighted dogs' loyalty, enthusiasm, and loving companionship. Both poems were written in a similar style but emphasized the distinct characteristics that make each pet special.`

Manage checkpoints

View thread state

`config = {
    "configurable": {
        "thread_id": "1",
        # optionally provide an ID for a specific checkpoint,
        # otherwise the latest checkpoint is shown
        # "checkpoint_id": "1f029ca3-1f5b-6704-8004-820c16b69a5a"
    }
}
graph.get_state(config)`

`StateSnapshot(
    values={'messages': [HumanMessage(content="hi! I'm bob"), AIMessage(content='Hi Bob! How are you doing today?'), HumanMessage(content="what's my name?"), AIMessage(content='Your name is Bob.')]},
    next=(),
    config={'configurable': {'thread_id': '1', 'checkpoint_ns': '', 'checkpoint_id': '1f029ca3-1f5b-6704-8004-820c16b69a5a'}},
    metadata={
        'source': 'loop',
        'writes': {'call_model': {'messages': AIMessage(content='Your name is Bob.')}},
        'step': 4,
        'parents': {},
        'thread_id': '1'
    },
    created_at='2025-05-05T16:01:24.680462+00:00',
    parent_config={'configurable': {'thread_id': '1', 'checkpoint_ns': '', 'checkpoint_id': '1f029ca3-1790-6b0a-8003-baf965b6a38f'}},
    tasks=(),
    interrupts=()
)`

`config = {
    "configurable": {
        "thread_id": "1",
        # optionally provide an ID for a specific checkpoint,
        # otherwise the latest checkpoint is shown
        # "checkpoint_id": "1f029ca3-1f5b-6704-8004-820c16b69a5a"
    }
}
checkpointer.get_tuple(config)`

`CheckpointTuple(
    config={'configurable': {'thread_id': '1', 'checkpoint_ns': '', 'checkpoint_id': '1f029ca3-1f5b-6704-8004-820c16b69a5a'}},
    checkpoint={
        'v': 3,
        'ts': '2025-05-05T16:01:24.680462+00:00',
        'id': '1f029ca3-1f5b-6704-8004-820c16b69a5a',
        'channel_versions': {'__start__': '00000000000000000000000000000005.0.5290678567601859', 'messages': '00000000000000000000000000000006.0.3205149138784782', 'branch:to:call_model': '00000000000000000000000000000006.0.14611156755133758'},
        'versions_seen': {'__input__': {}, '__start__': {'__start__': '00000000000000000000000000000004.0.5736472536395331'}, 'call_model': {'branch:to:call_model': '00000000000000000000000000000005.0.1410174088651449'}},
        'channel_values': {'messages': [HumanMessage(content="hi! I'm bob"), AIMessage(content='Hi Bob! How are you doing today?'), HumanMessage(content="what's my name?"), AIMessage(content='Your name is Bob.')]},
    },
    metadata={
        'source': 'loop',
        'writes': {'call_model': {'messages': AIMessage(content='Your name is Bob.')}},
        'step': 4,
        'parents': {},
        'thread_id': '1'
    },
    parent_config={'configurable': {'thread_id': '1', 'checkpoint_ns': '', 'checkpoint_id': '1f029ca3-1790-6b0a-8003-baf965b6a38f'}},
    pending_writes=[]
)`
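The lookup rule used above (latest checkpoint unless a `checkpoint_id` is given) and the `parent_config` link between snapshots can be pictured with a small toy. This is a sketch for intuition only: `ToyThreadHistory` is a hypothetical class covering a single thread, and the `cp-1`/`cp-2` identifiers are made up.

```python
class ToyThreadHistory:
    """Hypothetical single-thread checkpoint log, oldest first."""

    def __init__(self):
        self._checkpoints = []

    def save(self, checkpoint_id, values):
        # Each checkpoint remembers the one that came before it.
        parent_id = self._checkpoints[-1]["checkpoint_id"] if self._checkpoints else None
        self._checkpoints.append(
            {"checkpoint_id": checkpoint_id, "parent_id": parent_id, "values": values}
        )

    def get(self, config):
        # Without a checkpoint_id, return the latest checkpoint.
        wanted = config["configurable"].get("checkpoint_id")
        if wanted is None:
            return self._checkpoints[-1]
        for checkpoint in self._checkpoints:
            if checkpoint["checkpoint_id"] == wanted:
                return checkpoint
        raise KeyError(wanted)


toy_history = ToyThreadHistory()
toy_history.save("cp-1", {"messages": ["hi! I'm bob"]})
toy_history.save("cp-2", {"messages": ["hi! I'm bob", "Hi Bob!"]})

latest = toy_history.get({"configurable": {"thread_id": "1"}})
earlier = toy_history.get({"configurable": {"thread_id": "1", "checkpoint_id": "cp-1"}})

print(latest["checkpoint_id"], latest["parent_id"], earlier["values"])
```

Following `parent_id` from the latest checkpoint back to `None` walks the thread from newest to oldest.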

View the history of the thread

`config = {
    "configurable": {
        "thread_id": "1"
    }
}
list(graph.get_state_history(config))`

[
    StateSnapshot(
        values={'messages': [HumanMessage(content="hi! I'm bob"), AIMessage(content='Hi Bob! How are you doing today? Is there anything I can help you with?'), HumanMessage(content="what's my name?"), AIMessage(content='Your name is Bob.')]},
        next=(),
        config={'configurable': {'thread_id': '1', 'checkpoint_ns': '', 'checkpoint_id': '1f029ca3-1f5b-6704-8004-820c16b69a5a'}},
        metadata={'source': 'loop', 'writes': {'call_model': {'messages': AIMessage(content='Your name is Bob.')}}, 'step': 4, 'parents': {}, 'thread_id': '1'},
        created_at='2025-05-05T16:01:24.680462+00:00',
        parent_config={'configurable': {'thread_id': '1', 'checkpoint_ns': '', 'checkpoint_id': '1f029ca3-1790-6b0a-8003-baf965b6a38f'}},
        tasks=(),
        interrupts=()
    ),
    StateSnapshot(
        values={'messages': [HumanMessage(content="hi! I'm bob"), AIMessage(content='Hi Bob! How are you doing today? Is there anything I can help you with?'), HumanMessage(content="what's my name?")]},
        next=('call_model',),
        config={'configurable': {'thread_id': '1', 'checkpoint_ns': '', 'checkpoint_id': '1f029ca3-1790-6b0a-8003-baf965b6a38f'}},
        metadata={'source': 'loop', 'writes': None, 'step': 3, 'parents': {}, 'thread_id': '1'},
        created_at='2025-05-05T16:01:23.863421+00:00',
        parent_config={...},
        tasks=(PregelTask(id='8ab4155e-6b15-b885-9ce5-bed69a2c305c', name='call_model', path=('__pregel_pull', 'call_model'), error=None, interrupts=(), state=None, result={'messages': AIMessage(content='Your name is Bob.')}),),
        interrupts=()
    ),
    StateSnapshot(
        values={'messages': [HumanMessage(content="hi! I'm bob"), AIMessage(content='Hi Bob! How are you doing today? Is there anything I can help you with?')]},
        next=('__start__',),
        config={...},
        metadata={'source': 'input', 'writes': {'__start__': {'messages': [{'role': 'user', 'content': "what's my name?"}]}}, 'step': 2, 'parents': {}, 'thread_id': '1'},
        created_at='2025-05-05T16:01:23.863173+00:00',
        parent_config={...},
        tasks=(PregelTask(id='24ba39d6-6db1-4c9b-f4c5-682aeaf38dcd', name='__start__', path=('__pregel_pull', '__start__'), error=None, interrupts=(), state=None, result={'messages': [{'role': 'user', 'content': "what's my name?"}]}),),
        interrupts=()
    ),
    StateSnapshot(
        values={'messages': [HumanMessage(content="hi! I'm bob"), AIMessage(content='Hi Bob! How are you doing today? Is there anything I can help you with?')]},
        next=(),
        config={...},
        metadata={'source': 'loop', 'writes': {'call_model': {'messages': AIMessage(content='Hi Bob! How are you doing today? Is there anything I can help you with?')}}, 'step': 1, 'parents': {}, 'thread_id': '1'},
        created_at='2025-05-05T16:01:23.862295+00:00',
        parent_config={...},
        tasks=(),
        interrupts=()
    ),
    StateSnapshot(
        values={'messages': [HumanMessage(content="hi! I'm bob")]},
        next=('call_model',),
        config={...},
        metadata={'source': 'loop', 'writes': None, 'step': 0, 'parents': {}, 'thread_id': '1'},
        created_at='2025-05-05T16:01:22.278960+00:00',
        parent_config={...},
        tasks=(PregelTask(id='8cbd75e0-3720-b056-04f7-71ac805140a0', name='call_model', path=('__pregel_pull', 'call_model'), error=None, interrupts=(), state=None, result={'messages': AIMessage(content='Hi Bob! How are you doing today? Is there anything I can help you with?')}),),
        interrupts=()
    ),
    StateSnapshot(
        values={'messages': []},
        next=('__start__',),
        config={'configurable': {'thread_id': '1', 'checkpoint_ns': '', 'checkpoint_id': '1f029ca3-0870-6ce2-bfff-1f3f14c3e565'}},
        metadata={'source': 'input', 'writes': {'__start__': {'messages': [{'role': 'user', 'content': "hi! I'm bob"}]}}, 'step': -1, 'parents': {}, 'thread_id': '1'},
        created_at='2025-05-05T16:01:22.277497+00:00',
        parent_config=None,
        tasks=(PregelTask(id='d458367b-8265-812c-18e2-33001d199ce6', name='__start__', path=('__pregel_pull', '__start__'), error=None, interrupts=(), state=None, result={'messages': [{'role': 'user', 'content': "hi! I'm bob"}]}),),
        interrupts=()
    )
]

config = {
    "configurable": {
        "thread_id": "1"
    }
}
list(checkpointer.list(config))

[
    CheckpointTuple(
        config={'configurable': {'thread_id': '1', 'checkpoint_ns': '', 'checkpoint_id': '1f029ca3-1f5b-6704-8004-820c16b69a5a'}},
        checkpoint={
            'v': 3,
            'ts': '2025-05-05T16:01:24.680462+00:00',
            'id': '1f029ca3-1f5b-6704-8004-820c16b69a5a',
            'channel_versions': {'__start__': '00000000000000000000000000000005.0.5290678567601859', 'messages': '00000000000000000000000000000006.0.3205149138784782', 'branch:to:call_model': '00000000000000000000000000000006.0.14611156755133758'},
            'versions_seen': {'__input__': {}, '__start__': {'__start__': '00000000000000000000000000000004.0.5736472536395331'}, 'call_model': {'branch:to:call_model': '00000000000000000000000000000005.0.1410174088651449'}},
            'channel_values': {'messages': [HumanMessage(content="hi! I'm bob"), AIMessage(content='Hi Bob! How are you doing today? Is there anything I can help you with?'), HumanMessage(content="what's my name?"), AIMessage(content='Your name is Bob.')]},
        },
        metadata={'source': 'loop', 'writes': {'call_model': {'messages': AIMessage(content='Your name is Bob.')}}, 'step': 4, 'parents': {}, 'thread_id': '1'},
        parent_config={'configurable': {'thread_id': '1', 'checkpoint_ns': '', 'checkpoint_id': '1f029ca3-1790-6b0a-8003-baf965b6a38f'}},
        pending_writes=[]
    ),
    CheckpointTuple(
        config={'configurable': {'thread_id': '1', 'checkpoint_ns': '', 'checkpoint_id': '1f029ca3-1790-6b0a-8003-baf965b6a38f'}},
        checkpoint={
            'v': 3,
            'ts': '2025-05-05T16:01:23.863421+00:00',
            'id': '1f029ca3-1790-6b0a-8003-baf965b6a38f',
            'channel_versions': {'__start__': '00000000000000000000000000000005.0.5290678567601859', 'messages': '00000000000000000000000000000006.0.3205149138784782', 'branch:to:call_model': '00000000000000000000000000000006.0.14611156755133758'},
            'versions_seen': {'__input__': {}, '__start__': {'__start__': '00000000000000000000000000000004.0.5736472536395331'}, 'call_model': {'branch:to:call_model': '00000000000000000000000000000005.0.1410174088651449'}},
            'channel_values': {'messages': [HumanMessage(content="hi! I'm bob"), AIMessage(content='Hi Bob! How are you doing today? Is there anything I can help you with?'), HumanMessage(content="what's my name?")], 'branch:to:call_model': None}
        },
        metadata={'source': 'loop', 'writes': None, 'step': 3, 'parents': {}, 'thread_id': '1'},
        parent_config={...},
        pending_writes=[('8ab4155e-6b15-b885-9ce5-bed69a2c305c', 'messages', AIMessage(content='Your name is Bob.'))]
    ),
    CheckpointTuple(
        config={...},
        checkpoint={
            'v': 3,
            'ts': '2025-05-05T16:01:23.863173+00:00',
            'id': '1f029ca3-1790-616e-8002-9e021694a0cd',
            'channel_versions': {'__start__': '00000000000000000000000000000004.0.5736472536395331', 'messages': '00000000000000000000000000000003.0.7056767754077798', 'branch:to:call_model': '00000000000000000000000000000003.0.22059023329132854'},
            'versions_seen': {'__input__': {}, '__start__': {'__start__': '00000000000000000000000000000001.0.7040775356287469'}, 'call_model': {'branch:to:call_model': '00000000000000000000000000000002.0.9300422176788571'}},
            'channel_values': {'__start__': {'messages': [{'role': 'user', 'content': "what's my name?"}]}, 'messages': [HumanMessage(content="hi! I'm bob"), AIMessage(content='Hi Bob! How are you doing today? Is there anything I can help you with?')]}
        },
        metadata={'source': 'input', 'writes': {'__start__': {'messages': [{'role': 'user', 'content': "what's my name?"}]}}, 'step': 2, 'parents': {}, 'thread_id': '1'},
        parent_config={...},
        pending_writes=[('24ba39d6-6db1-4c9b-f4c5-682aeaf38dcd', 'messages', [{'role': 'user', 'content': "what's my name?"}]), ('24ba39d6-6db1-4c9b-f4c5-682aeaf38dcd', 'branch:to:call_model', None)]
    ),
    CheckpointTuple(
        config={...},
        checkpoint={
            'v': 3,
            'ts': '2025-05-05T16:01:23.862295+00:00',
            'id': '1f029ca3-178d-6f54-8001-d7b180db0c89',
            'channel_versions': {'__start__': '00000000000000000000000000000002.0.18673090920108737', 'messages': '00000000000000000000000000000003.0.7056767754077798', 'branch:to:call_model': '00000000000000000000000000000003.0.22059023329132854'},
            'versions_seen': {'__input__': {}, '__start__': {'__start__': '00000000000000000000000000000001.0.7040775356287469'}, 'call_model': {'branch:to:call_model': '00000000000000000000000000000002.0.9300422176788571'}},
            'channel_values': {'messages': [HumanMessage(content="hi! I'm bob"), AIMessage(content='Hi Bob! How are you doing today? Is there anything I can help you with?')]}
        },
        metadata={'source': 'loop', 'writes': {'call_model': {'messages': AIMessage(content='Hi Bob! How are you doing today? Is there anything I can help you with?')}}, 'step': 1, 'parents': {}, 'thread_id': '1'},
        parent_config={...},
        pending_writes=[]
    ),
    CheckpointTuple(
        config={...},
        checkpoint={
            'v': 3,
            'ts': '2025-05-05T16:01:22.278960+00:00',
            'id': '1f029ca3-0874-6612-8000-339f2abc83b1',
            'channel_versions': {'__start__': '00000000000000000000000000000002.0.18673090920108737', 'messages': '00000000000000000000000000000002.0.30296526818059655', 'branch:to:call_model': '00000000000000000000000000000002.0.9300422176788571'},
            'versions_seen': {'__input__': {}, '__start__': {'__start__': '00000000000000000000000000000001.0.7040775356287469'}},
            'channel_values': {'messages': [HumanMessage(content="hi! I'm bob")], 'branch:to:call_model': None}
        },
        metadata={'source': 'loop', 'writes': None, 'step': 0, 'parents': {}, 'thread_id': '1'},
        parent_config={...},
        pending_writes=[('8cbd75e0-3720-b056-04f7-71ac805140a0', 'messages', AIMessage(content='Hi Bob! How are you doing today? Is there anything I can help you with?'))]
    ),
    CheckpointTuple(
        config={'configurable': {'thread_id': '1', 'checkpoint_ns': '', 'checkpoint_id': '1f029ca3-0870-6ce2-bfff-1f3f14c3e565'}},
        checkpoint={
            'v': 3,
            'ts': '2025-05-05T16:01:22.277497+00:00',
            'id': '1f029ca3-0870-6ce2-bfff-1f3f14c3e565',
            'channel_versions': {'__start__': '00000000000000000000000000000001.0.7040775356287469'},
            'versions_seen': {'__input__': {}},
            'channel_values': {'__start__': {'messages': [{'role': 'user', 'content': "hi! I'm bob"}]}}
        },
        metadata={'source': 'input', 'writes': {'__start__': {'messages': [{'role': 'user', 'content': "hi! I'm bob"}]}}, 'step': -1, 'parents': {}, 'thread_id': '1'},
        parent_config=None,
        pending_writes=[('d458367b-8265-812c-18e2-33001d199ce6', 'messages', [{'role': 'user', 'content': "hi! I'm bob"}]), ('d458367b-8265-812c-18e2-33001d199ce6', 'branch:to:call_model', None)]
    )
]
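
The raw listing is dense, so it can help to reduce each checkpoint to a few fields. The following is a minimal sketch, not part of the LangGraph API: `summarize` and `FakeCheckpoint` are hypothetical names introduced here, and the sketch assumes only that each entry exposes `config` and `metadata` with the keys visible in the output above. The stand-in tuples let it run without a checkpointer.

```python
from collections import namedtuple

# Hypothetical stand-in so the sketch runs without a real checkpointer.
# Only the two fields read below are modelled.
FakeCheckpoint = namedtuple("FakeCheckpoint", ["config", "metadata"])


def summarize(checkpoints):
    """Return (step, source, checkpoint_id) rows, oldest checkpoint first."""
    rows = [
        (
            cp.metadata["step"],
            cp.metadata["source"],
            cp.config["configurable"]["checkpoint_id"],
        )
        for cp in checkpoints
    ]
    # The listing above is newest-first; sort by step to read it chronologically.
    return sorted(rows, key=lambda row: row[0])


# Values copied from the newest and oldest entries in the output above.
history = [
    FakeCheckpoint(
        config={"configurable": {"thread_id": "1", "checkpoint_ns": "",
                                 "checkpoint_id": "1f029ca3-1f5b-6704-8004-820c16b69a5a"}},
        metadata={"source": "loop", "step": 4},
    ),
    FakeCheckpoint(
        config={"configurable": {"thread_id": "1", "checkpoint_ns": "",
                                 "checkpoint_id": "1f029ca3-0870-6ce2-bfff-1f3f14c3e565"}},
        metadata={"source": "input", "step": -1},
    ),
]

for step, source, checkpoint_id in summarize(history):
    print(f"step={step:>2} source={source:<5} id={checkpoint_id}")
```

With a real checkpointer, the same function should accept the result of `checkpointer.list(config)` in place of `history`, provided the entries carry the `step`, `source`, and `checkpoint_id` keys shown in the listing.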

Delete all checkpoints for a thread

thread_id = "1"
checkpointer.delete_thread(thread_id)

Database management

setup()

BaseCheckpointSaver

BaseStore
